Exhibitions, Research, Criticism, Commentary

A chronology of 3,585 references across art, science, technology, and culture

“Even the word cypherpunk, I think it’s at least two-thirds gentrified at this point.”

“Today Is a Good Day to Discuss Digital Rights” at Espacio Fundación Telefónica Madrid features Aram Bartholl, Eva & Franco Mattes, United Visual Artists, and others, exploring online power dynamics through humour and provocation. Curated by Fundación Telefónica with Domestic Data Streamers, the show’s installations articulate how online actions shape (and erode) rights—from expression and data control to identity and the right to be forgotten.

“Data breaches are a perpetual concern with any data collection. Biometrics magnify that risk because your face cannot be reset, unlike a password or credit card number.”

“It’s not that these guys are so smart that they’re running circles around Congress. It’s that Congress created the enshittogenic environment and then we got enshittocene.”

“Projects involving mixers, zero-knowledge proofs, multi-party computation, and other privacy-preserving protocols could face existential legal risk—not for what they do, but for how someone uses them.”

“You’re asking millions of people to submit sensitive information to access legal content. That opens the door to leaks, abuse, and misuse of data.”

“Anything you put online can and probably has been scraped,” concludes AI ethics researcher William Agnew after finding thousands of personal documents in a tiny sample of DataComp CommonPool. The massive dataset, used to train image generation models, likely contains hundreds of millions of private photos, IDs, and résumés scraped from the web. As journalist Eileen Guo notes, the findings expose “the original sin of AI systems built off public data—it’s extractive, misleading, and dangerous.”

“As a paid exhibition, visitors invested time and money to support the artist and institution without damaging artworks or disrupting operations. Our photos were taken in good faith, reflecting genuine engagement with the exhibition.”

“There is a Latin America testing ground for products. If they are successful, they tend to be deployed in other jurisdictions, oftentimes with additional safeguards, sometimes not.”

“America already has all the technology it needs to build a draconian surveillance society—the conditions for such a dystopia have been falling into place slowly over time, waiting for the right authoritarian to come along and use it to crack down on American privacy and freedom.”

“If a government comes knocking at Telegram’s door asking for information on a wrongdoer, real or perceived, Telegram doesn’t have the same safety that its peers do. An end-to-end encrypted service can sincerely tell law enforcement that it can’t help them.”

“We need acts of translation, and I think artists are supplying images and metaphors that we can use as a common currency. These metaphors are alternatives to those offered by corporations.”

Simone C Niquille’s CGI film Beauty and The Beep (2024) premieres at EXPOSED Torino Foto Festival (IT), completing the Dutch artist’s trilogy on cohabitation with computer vision. Following an AI-trained computer model of a ‘smart chair’ trying, and struggling, to find a place to sit, the film playfully collages evidence of the modern datafied home: the chair is designed after Bertil, the first IKEA product advertised with synthetic imagery, while the parcours resembles Boston Dynamics’ model home for robot dogs.

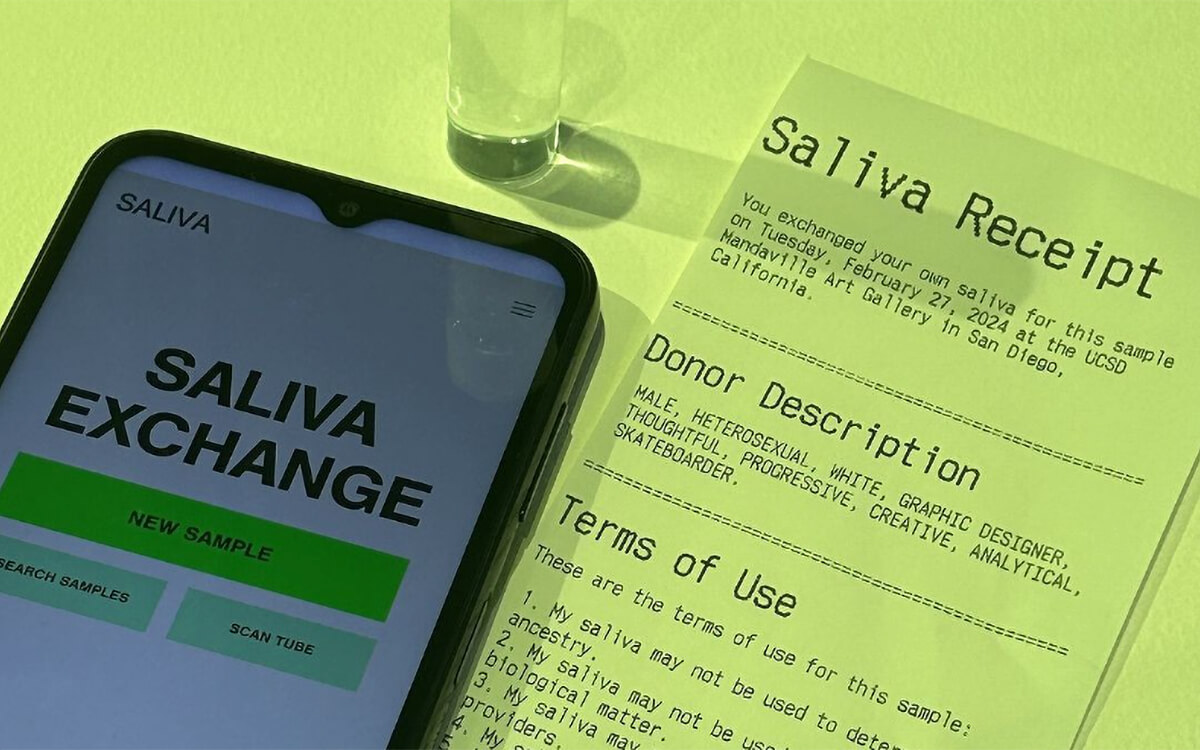

UC San Diego’s Mandeville Art Gallery opens “Bodily Autonomy,” Lauren Lee McCarthy’s largest solo show in the U.S. to date. Curator Ceci Moss brings together two major series of works—Surrogate (2022) and Saliva (2022)—in which the Chinese-American artist examines bio-surveillance through performances, videos, and installations. A newly commissioned Saliva Bar, for example, invites visitors to reflect on data privacy, race, gender, and class as they pertain to genetic material over traded spit samples.

“The growing awareness that unchecked centralization and over-financialization cannot be what ‘crypto is about,’ and new technologies like second-generation privacy solutions and rollups are finally coming to fruition, present us with an opportunity to take things in a different direction.”

The third edition of Japan’s Osaka Kansai International Art Festival ponders urban futures with a group exhibition that asks “STREET 3.0: Where Is The Street?” Curators Miwa Kutsuna and Yutaro Midorikawa present works by international artists that hack the city with technology (Aram Bartholl, Simon Weckert, AQV-EIKKKM), calligraphy, or scent. Bartholl’s Dead Drops (2010-, image), a network of more than 1,400 nodes, for example, inserts USB flash drives into the urban landscape for offline data sharing.

“On the whole, despite the ‘dystopian vibez’ of staring into an orb and letting it scan deeply into your eyeballs, it does seem like specialized hardware systems can do quite a decent job of protecting privacy.”

Worldcoin, a proof-of-personhood digital identity system for a future full of AI agents, launches. An initiative of Tools for Humanity (OpenAI’s Sam Altman and engineer Alex Blania), it proposes scanning the irises of everyone on earth to assign them an anonymized biometric identity and a related cryptocurrency. Anticipating AI-induced cultural shifts, Altman and Blania claim Worldcoin will let users “prove you are a real and unique person online” and assist in universal basic income (UBI) disbursement.
